Improving medical imaging with direct radiography

Innovations in imaging technology

Traditional analog X-ray imaging systems use special photographic film as the medium to convert transmitted X-rays into a visible image. To accomplish that task, the film must undergo a chemical development process that can take several minutes, delaying the start of patient treatment. Moreover, after the development process is complete, the medical team could discover that the image needs to be retaken due to improper X-ray exposure. After processing, the images must be physically handed to the attending physician and then stored with the patient’s records, which can accumulate into vast storage closets at medical offices. Additionally, the chemicals used in the development process have a finite useful life and must be carefully stored and disposed of once exhausted. All these challenges disappear with Direct Radiography (DR), a growing form of digital X-ray imaging.

The migration away from traditional X-ray imaging towards Direct Radiography is gaining momentum as the initial ownership costs decrease and the benefits become more apparent. The DR X-ray image is available within seconds of exposure and can be distributed immediately around the globe for consultation with medical specialists anywhere. In a digital format, patient images can be archived on small hard disk drives and retrieved quickly, instead of filling large file closets. A popular DR method uses a flat panel detector plate to capture the transmitted X-rays. The flat panel detector allows multiple images from different angles to be shot without moving or touching the panel, and its 1:1 sensor-to-image size ratio avoids lens distortion. Newer flat panel X-ray detectors can wirelessly transmit the image to the control unit for viewing, archiving and distribution. The process chemicals associated with film no longer have to be purchased, stored or discarded. Perhaps most importantly, two European studies indicate a 30 percent to 70 percent reduction in the X-ray dosage required to achieve a DR image quality comparable to that of analog film. Some flat panel designs communicate the exposure rate to the X-ray source in real time, guaranteeing a properly exposed image with the minimum radiation dosage. A lower X-ray dose improves the safety of both the patient and any health care professionals in the vicinity who may be exposed to scattered X-rays.

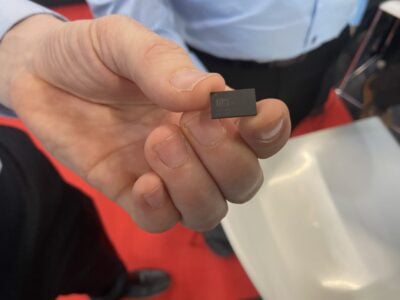

To create the image, many Direct Radiography systems use a full frame flat panel detector constructed of CMOS sensors covered by a scintillating layer. This layer converts the incident X-rays to a wavelength better absorbed by silicon. CMOS sensors are often favored because the manufacturing process is compatible with the construction of mixed signal and logic architectures, promoting a more integrated solution. The trend towards Direct Radiography is further encouraged by improvements in 200mm and 300mm silicon wafer manufacturing. Larger wafers allow fewer CMOS sensor modules to be combined to form an X-ray flat panel sensor conforming to the 1.5cm thick ISO-standard 35cm x 43cm (14 x 17 inch) X-ray film cassette size used in hospitals worldwide. It’s no surprise that the hardware design of the system directly influences the image quality, form factor, human safety and operating lifetime of these products. But does that include the power management components?

The tough battle against electronic noise

For Direct Radiography to realize all of its potential benefits, attention must be paid to the issues of electronic noise, heat and size. A high signal to noise ratio (SNR) must be maintained even as the X-ray dosage applied to the patient is reduced, which is a key goal. Although the noise performance of the sensor itself gets much of the attention, noise injection from the power supply also deserves careful consideration.

The power supply architecture has a direct influence on SNR performance. Voltage ripple on the power supply rail fed to the image sensor and the A/D converter can inject noise into the image. X-ray CMOS sensor makers are touting 14-bit and even 16-bit A/D conversion, supporting a wide contrast range to generate highly detailed images. Complicating matters further, a regulated negative rail between -3.3V and -7V is commonly required in addition to a regulated positive voltage to operate the image sensor, A/D converter and/or instrumentation amplifiers. Yet the battery pack or AC/DC power supply may only provide a single unregulated positive voltage. Therefore, the intermediary DC/DC converter must deliver low output ripple, in the tens of millivolts, together with high operating efficiency and low self-heating.
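To give a rough sense of scale, the short Python sketch below compares supply ripple with the size of one least significant bit (LSB) at 14 and 16 bits. The 26mVpp ripple is the LTM4613 figure quoted later in this article. The 2.0V full-scale range and the 60dB power supply rejection ratio (PSRR) of the signal chain are purely illustrative assumptions, not values from any datasheet.

```python
def lsb_volts(full_scale_v, bits):
    """Size of one LSB for an ideal converter with the given full-scale range."""
    return full_scale_v / (2 ** bits)


def ripple_after_psrr(ripple_vpp, psrr_db):
    """Ripple that remains after the signal chain rejects it by psrr_db decibels."""
    return ripple_vpp / (10 ** (psrr_db / 20.0))


FULL_SCALE_V = 2.0   # assumed A/D full-scale range (hypothetical)
PSRR_DB = 60.0       # assumed rejection of supply ripple (hypothetical)
RIPPLE_VPP = 0.026   # 26mVpp, the LTM4613 ripple quoted in this article

residual = ripple_after_psrr(RIPPLE_VPP, PSRR_DB)
for bits in (14, 16):
    lsb = lsb_volts(FULL_SCALE_V, bits)
    print(f"{bits}-bit: LSB = {lsb * 1e6:.1f} uV, "
          f"residual ripple = {residual * 1e6:.1f} uV "
          f"({residual / lsb:.2f} LSB)")
```

Under these assumptions the residual ripple is a noticeable fraction of one LSB at 16 bits, which illustrates why ripple in the tens of millivolts is a sensible target for the DC/DC converter.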

For patient comfort and convenience, many new X-ray imaging units, including the sensor panels, are mobile. A rechargeable battery with a 12V nominal output is often selected as the power source for the sensor panel. To capture and transmit hundreds of images on a single charge, high operating efficiency is required, favoring the use of switching regulators. Unfortunately, switch mode regulators are a source of radiated electromagnetic interference (EMI), which increases the noise level in the system. Additionally, to help medical staff maintain a safe distance from the patient, some X-ray sensor panels feature wireless data transmission. High levels of EMI could distort the captured image and/or disrupt wireless data transfer to the user terminal. Perhaps even more troubling, EMI emissions could exceed the levels allowed by government regulatory agencies, preventing the medical product from entering the market, a topic discussed later in this article.

The requirement for high operating efficiency serves a second purpose in the effort to maintain a high SNR. Dark current is the flow of charge that exists in a CMOS sensor even before X-ray exposure, and it increases exponentially with temperature. According to one X-ray CMOS sensor manufacturer, dark current roughly doubles for every 8°C temperature rise. Although post-processing can remove some dark current artifacts from the image, higher operating temperatures and accumulating damage from repeated X-ray exposure hasten the increase in dark current. Eventually, the dark current will overwhelm the charge deposited on the sensor by incident X-rays, at which point the flat panel detector must be replaced. Furthermore, since medical devices are often in contact with human tissue, unmanaged heat dissipation can lead to patient discomfort or burns in addition to reducing equipment operating lifetime.
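The doubling rule quoted above can be written as a simple scaling factor, 2^((T - T_ref) / 8). The Python sketch below applies it. The 25°C reference temperature is an assumption chosen only for illustration, and real sensors will follow the rule only approximately.

```python
def dark_current_scale(temp_c, ref_temp_c=25.0, doubling_step_c=8.0):
    """Dark current relative to its value at ref_temp_c.

    Assumes the rule of thumb from the text: dark current roughly
    doubles for every doubling_step_c (8 degC) of temperature rise.
    ref_temp_c is an illustrative assumption, not a datasheet value.
    """
    return 2.0 ** ((temp_c - ref_temp_c) / doubling_step_c)


for temp_c in (25, 33, 41, 49):
    print(f"{temp_c} degC: {dark_current_scale(temp_c):.1f}x the reference dark current")
```

Under this rule, a sensor that runs 16°C hotter carries about four times the dark current, so every watt of avoided self-heating contributes directly to SNR.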

The battle against heat

As previously mentioned, high operating temperatures degrade the SNR performance of the CMOS sensor and reduce its lifetime. Moreover, high operating temperatures pose a risk to patient safety. To maintain superior image resolution, the X-ray flat panel detector is placed in direct contact with the patient’s body. Human skin starts to suffer burns at temperatures as low as 40°C (104°F). Therefore, the exterior of any medical device that could potentially come in contact with skin must stay below this limit. Thus, high operating efficiency and the ability to spread the generated heat over a wide area are critical to sensor lifetime, image clarity and patient safety.
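The link between efficiency and heat follows from the power balance: a regulator delivering P_out at efficiency η dissipates P_out × (1/η − 1) as heat. The Python sketch below evaluates this for a hypothetical 10W panel load. The load and the efficiency values are illustrative assumptions only and do not describe any particular product.

```python
def regulator_loss_w(load_power_w, efficiency):
    """Heat dissipated inside a regulator, in watts.

    load_power_w: power delivered to the load
    efficiency:   output power divided by input power, in (0, 1]
    """
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return load_power_w * (1.0 / efficiency - 1.0)


LOAD_POWER_W = 10.0  # hypothetical panel load, for illustration only

for eff in (0.70, 0.80, 0.90, 0.95):
    loss = regulator_loss_w(LOAD_POWER_W, eff)
    print(f"efficiency {eff:.0%}: {loss:.2f} W dissipated as heat")
```

Moving from 80 percent to 90 percent efficiency in this example cuts the dissipated heat by more than half, heat that would otherwise have to be spread away from the sensor and from the patient-facing surface.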

Keeping that slim figure

From surgical system attachments to handheld examination tools, the increasing complexity of next-generation medical devices is at odds with the space available to fit the very components supporting these additional capabilities. In the case of a flat panel X-ray detector, existing hospital infrastructure has already allocated a fixed-size slot, known as a bucky slot, where the analog X-ray film cassette was formerly located. These film cassettes generally follow ISO 4090 guidelines, permitting external dimensions of 46cm x 38.6cm x 1.5cm for an X-ray image size of 43cm x 35cm (14 x 17 inches). A suitable power management solution needs to be compact and efficient to comply with such restrictive size limitations and to minimize operating temperature rise.

Regulatory compliance

As part of the regulatory requirements in the US and Europe, medical devices must be proven compliant with CISPR11, also known as EN55011. Since switching regulators radiate electromagnetic fields, the designer must either gain a full understanding of the switching regulator’s impact on EMI compliance or select a power solution that has been tested by the manufacturer to meet radiated EMI limits. Otherwise, expensive and time-consuming product iterations may be needed to achieve compliance. The most stringent radiated EMI limits are assigned to Group 1 – Class B devices, medical equipment intended for use in office buildings. Their radiated limit is identical to the EN55022 Class B (CISPR22 Class B) limit assigned to information technology equipment intended for use in office buildings and homes.

A long product lifetime

A power solution with proven reliability is a necessity for medical devices. In the case of an X-ray panel sensor, the panel must capture the image correctly the first time; otherwise, the patient and health care professional face a regrettable repeat radiation exposure. At a minimum, a delayed diagnosis delays the start of treatment, an unacceptable situation by modern medical standards.

Another factor to consider is how long the selected electronic components will remain available. After a lengthy regulatory approval process involving CE, UL, IEC and FDA hurdles, each electronic medical device should be manufacturable for a long period of time, upwards of 15 years. This is much longer than the consumer product cycles that many power management semiconductor vendors serve as their primary market. Product requalification solely due to obsolete components is a burden on engineering resources and the bottom line.

Solution: Advanced DC/DC switching regulators

To help design engineers address the challenges of electronic noise, heat and size in medical applications, Linear Technology offers a broad selection of over fifty different µModule® power products. Each of these products is a highly efficient, fully integrated DC/DC switching power management solution in a compact surface mount package (Figure 1). These switch mode regulators were carefully designed to operate with low output ripple in both negative (inverting) and positive output voltage circuit configurations, as shown in Figures 2 & 3. A sub-group of µModule power products, the EN55022 class B certified µModule regulators, presents an ideal solution to the EMI challenges found in medical applications. These switching regulators were certified by independent laboratories such as TUV to meet the industry’s EN55022 class B (equivalent to CISPR11 / EN55011 Group 1 – Class B) standard for radiated EMI at output currents up to 8A. The test results, obtained using the respective standard demo boards, are publicly available online. An excerpt is shown in Figure 4. Selecting a known compliant, fully integrated step-down solution such as a µModule regulator reduces the design time and risk associated with meeting these requirements using common switching regulators or controllers.

Figure 1: µModule power products are a complete DC/DC switching solution in a thermally enhanced LGA or BGA package, providing a convenient means to dissipate heat through the top & bottom of the package

Figure 2a

Figure 2b

Figure 3: LTM4613 has just 26mVpp output ripple (12VIN to 5VOUT @ 5A) to minimize noise injection into the CMOS sensor & signal conditioning components for a high quality image

The risk associated with high output ripple and radiated EMI should not be underestimated. These two factors affect a product’s ability to function properly the first time and to meet strict government regulations. In an X-ray flat panel, designs with well controlled output ripple and EMI emissions deliver a high signal to noise ratio for a high quality, high resolution image; reliable first-time-right image capture that avoids delays in treatment and unnecessary repeat radiation exposure; reliable wireless communication; and faster EMI compliance testing. For these reasons, Linear Technology took great care to have these devices certified by independent test laboratories such as TUV, with the test results made publicly available online. Beyond noise and EMI concerns, the right power management solution also needs to address efficiency, reliability and thermal dissipation.

µModule power products are highly efficient switching regulators offered in a surface mount LGA or BGA package constructed of thermally conductive plastic with a flat top. A single flat top covering a complete power management solution is conducive to heat sinking techniques to minimize the temperature rise at any particular point of the medical device’s exterior (Figure 5). As mentioned previously, maintaining a cool operating temperature improves patient safety, signal to noise ratio and equipment operating lifetime. With the largest µModule power products measuring 15mm x 15mm x 5mm and the smallest measuring 6.25mm x 6.25mm x 2.3mm, space is freed up for more important features such as increased battery size for longer operating time between charging.

All µModule regulators undergo extensive device level and board level testing to ensure reliable operation. To date, the product family has accumulated over 23.5 million device-hours of power cycling and 3 million device-hours of accelerated life testing without failure. Reliability is further backed by a complete suite of design, application and manufacturing guidelines to ensure that performance meets product expectations. A complete report listing all reliability tests performed, together with the results, is posted for review at www.linear.com/umodule. In support of medical devices with long production lifetimes, Linear Technology has a strong track record of product non-obsolescence, a policy that continues with the µModule power product line.
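As a rough way to read a "device-hours without failure" figure, a common textbook approach is the zero-failure upper bound on a constant failure rate: λ ≤ −ln(1 − CL) / T, where T is the accumulated device-hours and CL is the chosen confidence level. The Python sketch below applies it to the 23.5 million device-hours quoted above. It assumes a constant (exponential) failure rate and applies no acceleration factor, and the confidence levels are arbitrary choices, so the output is illustrative only and is not a published reliability rating for these products.

```python
import math


def failure_rate_upper_bound_fit(device_hours, confidence=0.60):
    """Upper bound on failure rate, in FIT, after a test with zero failures.

    FIT = failures per 1e9 device-hours.
    Assumes a constant failure rate (exponential lifetime model) and
    no acceleration factor; both are simplifying assumptions.
    """
    if device_hours <= 0:
        raise ValueError("device_hours must be positive")
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be between 0 and 1")
    rate_per_hour = -math.log(1.0 - confidence) / device_hours
    return rate_per_hour * 1e9


DEVICE_HOURS = 23.5e6  # power cycling device-hours quoted in the text

for cl in (0.60, 0.90):
    fit = failure_rate_upper_bound_fit(DEVICE_HOURS, cl)
    print(f"{cl:.0%} confidence: failure rate below about {fit:.0f} FIT")
```

The bound tightens in direct proportion to accumulated device-hours, which is why a long, failure-free test history carries weight when selecting components for equipment expected to stay in production for 15 years.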

Conclusion

Although this article has focused on design challenges with respect to digital X-ray flat panel detectors, the challenges described here are not unique to this product. From surgical system attachments to endoscopes to portable imaging and monitoring systems, medical professionals are always looking for the most effective, reliable and smallest tools available. Equipment pushing these boundaries enables advancements in medical procedures that minimize patient discomfort, scarring and recovery times, while improving the safety of patients and personnel. Additional electronics in such products enable doctors to be more precise, have better vision and control, and even extend the careers of highly trained surgeons. Given the added business demands of limited engineering resources, time to market, and proven product reliability with a long production lifetime to match, what power solutions can meet these requirements? Linear Technology’s lineup of µModule power products is carefully designed and has been proven to meet a large combination of demands.

About the author:

Willie Chan is Sr. Product Marketing Engineer, µModule Power Products at Linear Technology Corporation.

Acknowledgements:

Jaino Parasseril

Yan Liang

Jason Sekanina

Dongyan Zhou
